Product Changeover Inspection Models on Edge Compute

Deploying per-variant inspection models directly to edge compute modules eliminates the round-trip latency and revalidation cost that central-server architectures impose on multi-variant lines.

The latency budget on a live production line is tighter than most people realize. When a barcode scanner reads the product variant at station entry, you have roughly 120 milliseconds before the reject mechanism at the end of that station needs a go/no-go signal. Miss that window and you're either letting bad parts through or cutting production throughput with false holds. That constraint alone shapes almost every architectural decision in machine vision deployment, including the central question: does the inference run on a local edge module, or does it round-trip to a central server?

We've found that this question gets debated at the infrastructure level when it should be settled at the timing level. Start with the physics of the problem. A round-trip to a server on the same plant LAN can range from 8 to 40 milliseconds, but that range assumes the network is well-managed and lightly contended. On a manufacturing floor with EtherNet/IP traffic from PLCs, SCADA polling, and potentially camera streams from multiple stations, real-world latency spikes are common. Add 40-80ms for actual model inference on shared server hardware (depending on GPU allocation and batch queuing), and you're already at 48-120ms before accounting for any jitter. The top of that range already consumes the entire 120ms budget. For a station running at 30 parts per minute, that math gets uncomfortable fast.
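The budget arithmetic can be sketched in a few lines of Python. This is only an illustration: the network and inference ranges are the figures quoted above, and the function names are hypothetical.

```python
BUDGET_MS = 120  # go/no-go window between barcode read and reject signal


def total_latency_ms(network_ms, inference_ms):
    """Sum the network round-trip and the inference time for one inspection."""
    return network_ms + inference_ms


def headroom_ms(latency_ms, budget_ms=BUDGET_MS):
    """Time left before the reject signal is due; zero or negative leaves no room for jitter."""
    return budget_ms - latency_ms


# Central-server figures quoted above: 8-40 ms network, 40-80 ms inference.
central_best = total_latency_ms(8, 40)
central_worst = total_latency_ms(40, 80)

print("central best case (ms):", central_best)
print("central worst case (ms):", central_worst)
print("worst-case headroom (ms):", headroom_ms(central_worst))
```

With the worst-case figures, headroom drops to zero before any jitter is added, which is the core of the timing argument.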

Why Edge Compute Changes the Timing Equation

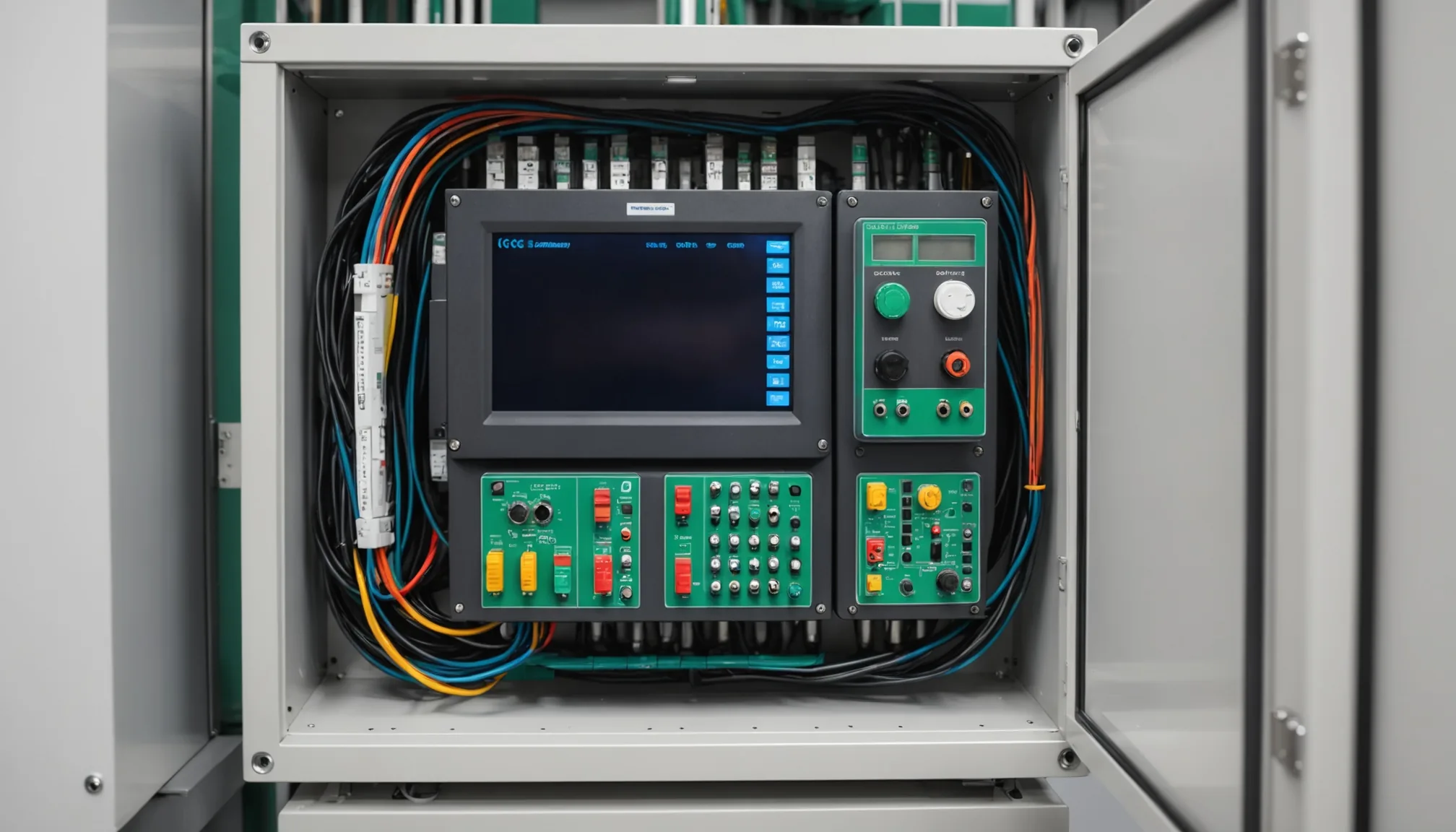

An industrial edge module positioned at the inspection station eliminates the network round-trip entirely. Inference happens locally, and the reject signal goes directly over EtherNet/IP to the PLC. Latency for the full cycle, from camera frame capture through model inference to PLC signal, sits consistently under 90ms on current-generation edge hardware. That leaves at least 25% of the 120ms budget as margin, and it holds as a consistent ceiling rather than an average.

That consistency matters more than the raw number. Quality engineers tell us that intermittent latency is the failure mode that's hardest to diagnose after the fact. A part that gets through on a network spike leaves no evidence it was inspected late. Central-server architectures introduce that variability structurally, especially during shift changes when SCADA polling and operator logins spike simultaneously.
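A small sketch shows why the average hides the problem. The latency samples below are invented for illustration and are not measurements; only the 120ms budget comes from the text above.

```python
from statistics import mean

BUDGET_MS = 120


def budget_report(samples_ms, budget_ms=BUDGET_MS):
    """Summarize latency samples by mean, worst case, and number of budget misses."""
    return {
        "mean_ms": mean(samples_ms),
        "worst_ms": max(samples_ms),
        "misses": sum(1 for s in samples_ms if s > budget_ms),
    }


# Hypothetical samples: the central path has one network spike, the edge path has none.
central_samples = [70, 75, 80, 72, 160, 78]
edge_samples = [84, 86, 85, 88, 87, 86]

print("central:", budget_report(central_samples))
print("edge:", budget_report(edge_samples))
```

In this made-up data the two means are within a few milliseconds of each other, yet only the central path misses the window. A monitoring dashboard that tracks mean latency would not surface that part.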

There's a bandwidth dimension too. A line running four cameras at 5 megapixels each, capturing 30 frames per second, generates around 600MB/s of raw image data (assuming 8-bit monochrome pixels). Most manufacturing network infrastructure wasn't designed for that kind of sustained throughput, and even where it can handle it, dedicating that bandwidth to a single inspection station leaves little headroom for everything else on the floor. Edge inference means only the inspection result, a few bytes, travels the network rather than the image stream.
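The raw data rate follows directly from camera count, resolution, and frame rate. The sketch below assumes one byte per pixel (8-bit monochrome), which is the assumption under which the 600MB/s figure works out; color or higher bit-depth sensors would scale it up.

```python
def raw_image_rate_mb_s(cameras, megapixels, fps, bytes_per_pixel=1):
    """Uncompressed image data rate in MB/s (1 MB = 10**6 bytes).

    megapixels * bytes_per_pixel gives MB per frame, so the units cancel cleanly.
    """
    return cameras * megapixels * fps * bytes_per_pixel


# Four 5 MP cameras at 30 fps, 8-bit monochrome (assumed).
print(raw_image_rate_mb_s(cameras=4, megapixels=5, fps=30))

# Same line with 3 bytes per pixel (e.g. 24-bit color), for comparison.
print(raw_image_rate_mb_s(cameras=4, megapixels=5, fps=30, bytes_per_pixel=3))
```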

Multi-Variant Lines and Model Library Management

Single-product lines are a reasonable match for central-server inference. The architecture is simpler, and latency can be managed with dedicated GPU allocation. The problem compounds when you add variant complexity.

A mid-size manufacturer running 12-20 product variants on a shared line needs a different model loaded for each variant. Each model encodes the unique defect signatures, dimensional tolerances, and surface characteristics for that specific SKU. Sharing models across variants degrades detection rates, particularly for subtle surface defects that differ by a few microns between variants. The variant-switch latency on a central server, which must flush one model from GPU memory and load another, can run 800ms to 3 seconds depending on model size and memory pressure. During that window, parts can enter the inspection zone before the correct model is ready.

On an edge module, the full model library for a product family lives in local storage. Typical model sizes for a single-station inspection task run 200-400MB. A library of 20 variants takes 4-8GB, well within the storage capacity of current edge hardware. When a barcode triggers a variant switch, the correct model loads from local NVMe storage in under 200ms. That's fast enough to be invisible to the line.
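A minimal sketch of a local model library, under stated assumptions: the `ModelLibrary` class, the `<variant>.model` file naming, and reading the file as raw bytes are all hypothetical stand-ins for whatever the inference runtime actually provides.

```python
from pathlib import Path


def library_size_gb(variants, model_mb):
    """Total library size in GB for a given variant count and per-model size in MB."""
    return variants * model_mb / 1000


class ModelLibrary:
    """Hypothetical per-variant model store on local disk."""

    def __init__(self, root):
        self.root = Path(root)
        self.active_variant = None
        self.active_model = None

    def model_path(self, variant_id):
        # Assumed naming convention: one file per variant.
        return self.root / f"{variant_id}.model"

    def switch_to(self, variant_id):
        """Load the model for variant_id; return False if it is already active."""
        if variant_id == self.active_variant:
            return False
        path = self.model_path(variant_id)
        if not path.exists():
            raise FileNotFoundError(f"no model for variant {variant_id!r}")
        # Stand-in for a real runtime load from local storage.
        self.active_model = path.read_bytes()
        self.active_variant = variant_id
        return True


# Sizing for 20 variants at the 200-400 MB range quoted above.
print(library_size_gb(20, 200), library_size_gb(20, 400))
```

In use, the barcode read at station entry would supply `variant_id`, and `switch_to` would be a no-op for consecutive parts of the same variant.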

In practice, we see manufacturers try to minimize model count by clustering variants into inspection groups. That approach has real costs: cluster boundaries have to be drawn conservatively, which means models are trained on a broader range of acceptable variation, which means defect sensitivity drops at the edges of each cluster. The local model library approach lets you maintain per-variant fidelity without that tradeoff.

Model Update Workflows and Maintenance Windows

One of the legitimate concerns with edge deployment is update complexity. With a central server, you push a new model once and all stations see it. With edge modules, you're pushing to N devices across the floor. That concern is valid but solvable.

The update cadence for an inspection model is typically tied to production events: a new product variant introduction, a supplier change that shifts raw material characteristics, or an active learning retraining cycle after quality engineers flag a batch of false positives. These events happen on a schedule. They're not continuous. Planned maintenance windows, usually overnight or during scheduled downtime between shifts, are the right time for model updates on edge devices.

The active learning loop is worth addressing specifically. When an inspection system collects borderline images and submits them for human review, the retrained model needs to reach the edge module before the next production run. A typical retraining cycle, if the active learning queue is reviewed promptly, runs 2-6 hours. That fits cleanly within overnight maintenance windows. The practical constraint is having a reliable device management layer that can push model updates to all modules, verify checksums, and roll back on failure, without requiring manual intervention at each station. Standard over-the-air update frameworks handle this. Industrial device management is not new.
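The verify-and-roll-back step can be sketched with the Python standard library alone. This is an illustration of the idea, not a replacement for a device management framework; the function names, the `.bak` backup convention, and the file layout are assumptions.

```python
import hashlib
import os
import shutil
from pathlib import Path


def sha256_of(path):
    """Hash a file in 1 MiB chunks so large models do not need to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def backup_path(live):
    """Hypothetical convention: keep the previous model next to the live one."""
    live = Path(live)
    return live.with_suffix(live.suffix + ".bak")


def install_model(staged, live, expected_sha256):
    """Replace `live` with `staged` only if the checksum matches.

    On a mismatch the staged file is discarded and the live model is untouched.
    """
    staged, live = Path(staged), Path(live)
    if sha256_of(staged) != expected_sha256:
        staged.unlink()
        return False
    if live.exists():
        shutil.copy2(live, backup_path(live))  # keep the previous version
    os.replace(staged, live)  # atomic when both paths share a filesystem
    return True


def rollback(live):
    """Restore the previous model if a backup exists."""
    backup = backup_path(live)
    if not backup.exists():
        return False
    os.replace(backup, Path(live))
    return True
```

A management layer would call `install_model` on each module during the maintenance window and fall back to `rollback` if a post-install validation run fails.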

What's less commonly discussed is partial revalidation. In a central-server architecture, updating a model for one variant technically requires re-qualifying the entire inference pipeline, since the shared environment changed. On an edge module, you can treat each station as an isolated qualified system. A model update for variant B doesn't affect the qualified state of variant A's model on the same device, provided the inference runtime itself didn't change. Quality engineers tell us that this isolation simplifies their change control documentation significantly.

Where Central-Server Architecture Still Makes Sense

Edge isn't universal. For inspection tasks where latency requirements are relaxed, say, final audit inspection with a human checkpoint downstream, or low-throughput specialty lines running under 10 parts per minute, a central server can work well. The economics favor centralization when GPU utilization would be low per station: a single GPU handling multiple low-throughput stations beats deploying edge hardware to each one.

Central architectures also simplify certain logging requirements. When all inference runs on one server, audit logs consolidate naturally. On edge deployments, you need a logging strategy that aggregates results from multiple devices into a single quality record system. Solvable, but it adds integration work upfront.

The decision framework is actually straightforward once you lay out the constraints explicitly. High throughput, strict latency budget, many variants, large model libraries: edge wins. Low throughput, relaxed timing, few variants, strong need for centralized logging: central server is defensible. Most mid-size manufacturers running complex product mixes land firmly in the first category.
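Written out as code, the framework might look like the sketch below. The thresholds are assumptions drawn from figures mentioned in this article (a 120ms worst-case central round-trip, under 10 parts per minute as low throughput, 12 variants as the lower end of a complex product mix), and a real evaluation would weigh more factors than these.

```python
CENTRAL_WORST_CASE_MS = 120  # 40 ms network + 80 ms inference, before jitter
LOW_THROUGHPUT_PPM = 10      # parts per minute
MANY_VARIANTS = 12           # assumed cutoff for a complex product mix


def recommend_architecture(parts_per_minute, latency_budget_ms, variant_count,
                           human_checkpoint_downstream=False):
    """Return "edge" or "central" from the constraints laid out above."""
    strict_timing = (latency_budget_ms <= CENTRAL_WORST_CASE_MS
                     and not human_checkpoint_downstream)
    many_variants = variant_count >= MANY_VARIANTS
    low_throughput = parts_per_minute < LOW_THROUGHPUT_PPM

    if strict_timing or (many_variants and not low_throughput):
        return "edge"
    return "central"


# A 30 ppm line with a 120 ms budget and 16 variants.
print(recommend_architecture(30, 120, 16))
# A slow final-audit line with a human checkpoint downstream.
print(recommend_architecture(8, 500, 2, human_checkpoint_downstream=True))
```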

Fitting Active Learning Into an Edge Maintenance Cadence

Active learning is where edge deployment requires the most operational discipline. The loop has four steps: collect borderline images during production, batch them to the review queue, retrain on validated labels, push the updated model to edge devices. Each step introduces latency. On a busy line generating 50-100 borderline images per shift, review queues can grow faster than quality teams can clear them.

We've found that the right cadence for active learning in an edge context is weekly model updates for lines with stable production, with an escalation path for immediate updates when a new defect type is identified. That means quality teams review flagged images daily, but retraining and deployment happen on a schedule rather than continuously. Continuous deployment to edge devices creates its own change control burden.

The other discipline is storage management on the edge module. Borderline images should be compressed and forwarded to central storage promptly rather than accumulated locally. A single station generating 100 flagged images per shift at 5MP produces roughly 500MB of uncompressed data per shift (at 8 bits per pixel), which adds up quickly against edge storage that also has to hold the model library. Automated transfer policies keep local storage clear without manual intervention.
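A rough sizing sketch makes the accumulation concrete. It assumes uncompressed 8-bit images, and the 32GB of free local storage is a hypothetical figure chosen only for the example.

```python
def flagged_mb_per_shift(images, megapixels, bytes_per_pixel=1):
    """Uncompressed size in MB of the flagged images from one shift."""
    return images * megapixels * bytes_per_pixel


def shifts_until_full(free_mb, mb_per_shift):
    """Whole shifts of flagged images that fit in the free space with no forwarding."""
    return free_mb // mb_per_shift


per_shift = flagged_mb_per_shift(images=100, megapixels=5)
print("MB per shift:", per_shift)

# Hypothetical: 32 GB of local storage left after the model library.
print("shifts until full:", shifts_until_full(32_000, per_shift))
```

Compression and prompt forwarding change these numbers substantially, which is why the transfer policy matters more than the raw capacity.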

None of this is architecturally complex. It's operational discipline applied to a distributed system. Manufacturing operations teams understand distributed systems; they run them on the floor every day with PLCs, sensors, and SCADA. The edge compute model for machine vision inference is less novel than it appears at first.

The 120ms latency budget isn't arbitrary. It's the physics of the line. Edge compute is the architecture that respects those physics consistently, across shifts, across variant switches, and across the retraining cycles that keep models calibrated to real production conditions. That's the case for it, and it's enough.
