Adding AI Defect Detection to Cognex and Keyence Installations

Mid-size manufacturers with Cognex In-Sight or Keyence IV2 cameras can add AI surface defect detection without replacing the existing installation or changing its inspection program.

Most mid-size manufacturers we talk to have the same thing on their inspection line: a Cognex In-Sight or Keyence IV2 smart camera, installed somewhere between 2016 and 2022, doing exactly what it was configured to do. Presence checks. Gross dimensional checks. Pattern matching on connector positions or label placement. Fast, reliable, deterministic. Nobody wants to touch it.

The problem is surface defects. Scratches, porosity, micro-cracks, cosmetic variation tied to supplier lot changes. Rule-based vision systems are not built for that class of problem. You can spend weeks tuning a Cognex In-Sight filter set to catch a new scratch pattern and still have 15 percent of those defects escape in production. Every quality engineer we have spoken to knows this frustration personally.

The fix is not ripping out the Cognex or Keyence installation. That is expensive, disruptive, and unnecessary. The fix is layering AI inference on top of the existing camera, running in parallel, without touching the rule-based configuration at all.

What Cognex In-Sight and Keyence IV2 Do Well

To be direct: Cognex In-Sight and Keyence IV2 are excellent tools for the jobs they were designed for. Presence/absence detection at cycle times under 50 ms. Dimensional checks to sub-millimeter tolerances. OCR for date codes and serial numbers. Pattern matching for connector orientation and assembly completeness.

They are also operationally mature. The PLC knows how to read their pass/fail outputs. Your line operators know what the indicator lights mean. Your maintenance team can swap a camera head without calling an integrator. That accumulated infrastructure has real value. Don't discard it.

Where they structurally fail is anything requiring learned visual judgment. Surface defect detection is the clearest example. Scratches vary in orientation, depth, and length. Porosity clusters differ by casting lot and ambient temperature. The exact defect signature that causes a functional failure in one product variant may be cosmetically acceptable on another. Rule-based systems cannot hold all of that simultaneously. AI can, and the two approaches address different halves of the inspection problem.

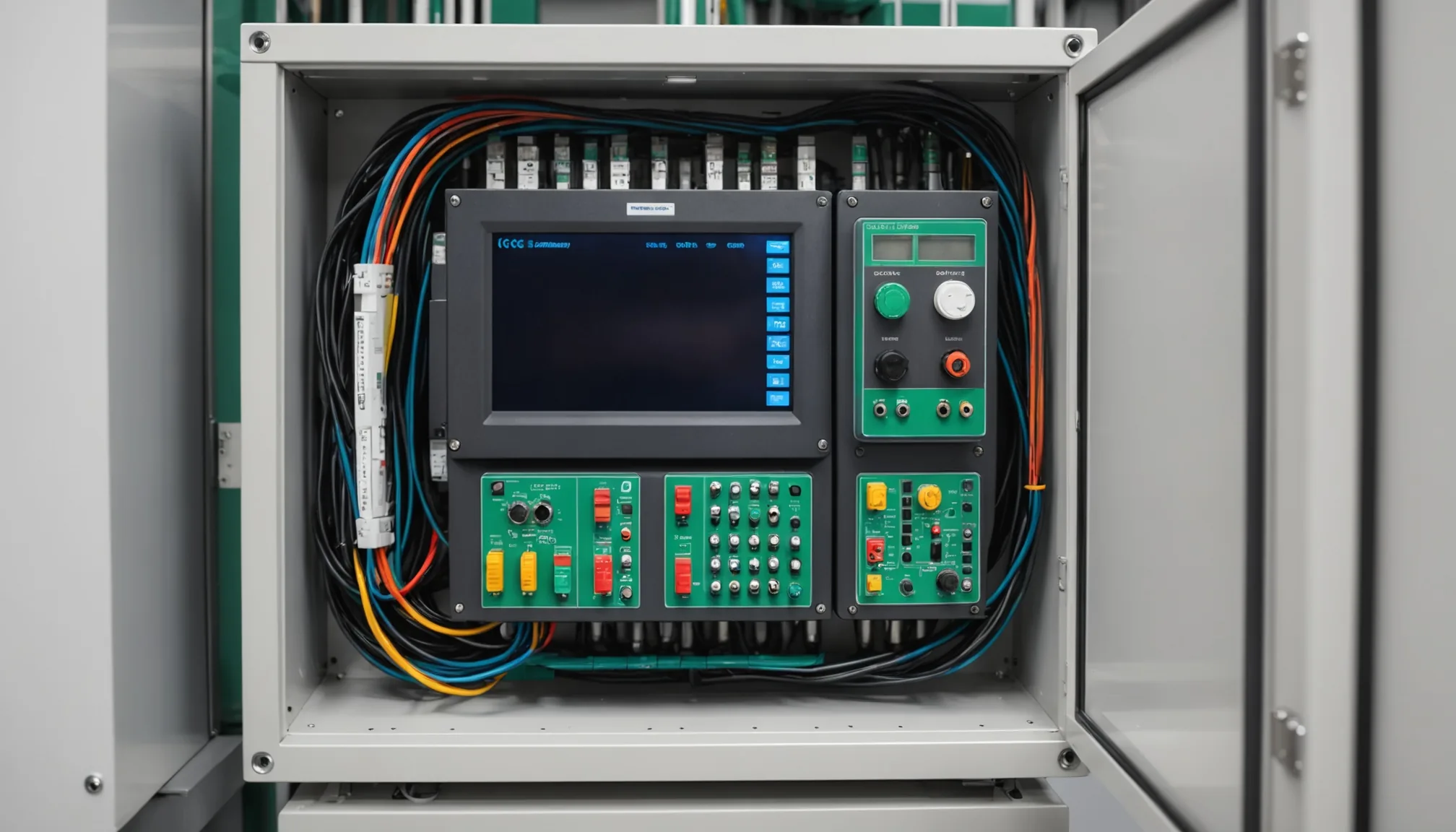

The Parallel-Layer Architecture

The integration pattern we use puts AI inference in a parallel lane. Not a replacement lane. The Cognex or Keyence camera continues running its existing job. Its outputs go to the PLC exactly as before. The AI inference layer receives a copy of the same image independently, runs its own defect detection model, and sends a separate signal to the PLC. Two votes. Neither overrides the other.

Practically, the architecture looks like this:

- The existing smart camera triggers on the same part-present sensor it always has.

- The AI inference host (typically an edge compute unit at the station) subscribes to the image output via SDK or Ethernet.

- AI inference runs asynchronously and completes within the existing cycle time budget.

- The PLC receives both outputs and can be configured to reject on either fail condition independently.
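The key property of the parallel lane is that the rule-based verdict never waits on the AI. The sketch below illustrates that idea on the inference host, assuming a Python service and a model exposed as a simple callable; `inspect_cycle`, `StationResult`, and the budget value are hypothetical names chosen for illustration, not part of any Cognex or Keyence SDK. If the AI misses its time budget or errors out, it simply casts no vote.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StationResult:
    rule_fail: bool          # existing Cognex/Keyence verdict, passed through untouched
    ai_fail: Optional[bool]  # None = the AI lane cast no vote this cycle
    ai_latency_ms: float     # time spent waiting on the AI lane


def inspect_cycle(
    image: bytes,
    rule_verdict: bool,
    ai_model: Callable[[bytes], bool],
    executor: ThreadPoolExecutor,
    budget_s: float,
) -> StationResult:
    """Collect an AI vote beside the rule-based verdict without ever blocking it."""
    start = time.monotonic()
    future = executor.submit(ai_model, image)
    try:
        ai_fail = future.result(timeout=budget_s)
    except FutureTimeout:
        ai_fail = None  # missed the cycle budget: the rule-based verdict stands alone
    except Exception:
        ai_fail = None  # model or host error: same fallback, the line keeps running
    elapsed_ms = (time.monotonic() - start) * 1000.0
    return StationResult(rule_fail=rule_verdict, ai_fail=ai_fail, ai_latency_ms=elapsed_ms)


if __name__ == "__main__":
    with ThreadPoolExecutor(max_workers=2) as pool:
        print(inspect_cycle(b"frame", False, lambda img: True, pool, budget_s=0.2))
```

A timed-out inference keeps running in its worker thread, so a production host would also need to drop stale frames; this sketch only shows that a late or failed AI vote degrades to "no vote" rather than stalling the station.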

The line keeps running at the same speed. The Cognex or Keyence program is never modified. If the AI inference layer goes offline for maintenance or a model update, the existing rule-based inspection continues without interruption. Additive, not load-bearing. That last point matters a great deal for uptime-sensitive lines where any inspection gap creates a traceability problem.

SDK Integration: Cognex In-Sight

Cognex In-Sight cameras expose image data through the In-Sight SDK (available in .NET and C++) and also through a socket-based protocol called the Native Mode Interface. For AI integration, we use the Native Mode Interface to pull the raw image after each acquisition. The workflow is:

- Connect to the camera's TCP/IP port (default 23) using Telnet-compatible commands.

- Issue the `IE` command (Image Export) to retrieve the most recently acquired image as a bitmap.

- Push the image to the AI inference host over a local Ethernet connection.

- Return the inference result via a PLC-compatible digital output (discrete I/O or Modbus TCP).
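The export and push steps above can be sketched as a small TCP client. This is a minimal illustration, not Cognex's API: the reply framing (a status line where "1" means success, a byte-count line, then raw image bytes) and the 4-byte length prefix used toward the inference host are hypothetical assumptions, so check the In-Sight Native Mode reference for the actual reply format on your firmware. The helper names `export_image`, `frame_for_host`, and `open_native_mode` are illustrative.

```python
import io
import socket
import struct
from typing import BinaryIO

# ASSUMPTION (hypothetical framing): after a command the camera replies with a
# status line ("1" = success), a decimal byte-count line, then the raw image bytes.
# Confirm the real reply format in the In-Sight Native Mode documentation.


def export_image(reader: BinaryIO, writer: BinaryIO, command: str = "IE") -> bytes:
    """Send an image-export command and return the image bytes from the reply."""
    writer.write(command.encode("ascii") + b"\r\n")
    writer.flush()
    status = reader.readline().decode("ascii").strip()
    if status != "1":
        raise RuntimeError(f"camera returned status {status!r} for {command!r}")
    size = int(reader.readline().decode("ascii").strip())
    data = reader.read(size)
    if len(data) != size:
        raise ConnectionError(f"expected {size} image bytes, got {len(data)}")
    return data


def frame_for_host(image: bytes) -> bytes:
    """Hypothetical 4-byte big-endian length prefix so the inference host can split images."""
    return struct.pack(">I", len(image)) + image


def open_native_mode(host: str, port: int = 23) -> tuple[BinaryIO, BinaryIO]:
    """Open the camera's TCP port as buffered read/write streams.

    Native Mode sessions typically begin with a user/password login prompt;
    handle that handshake before calling export_image (not shown here).
    """
    sock = socket.create_connection((host, port), timeout=2.0)
    return sock.makefile("rb"), sock.makefile("wb")


if __name__ == "__main__":
    # Offline demo against an in-memory fake reply (no camera needed).
    sent = io.BytesIO()
    image = export_image(io.BytesIO(b"1\r\n4\r\nBM\x00\x01"), sent)
    print(sent.getvalue(), frame_for_host(image))
```

Splitting the reader and writer keeps the parsing logic testable against in-memory streams before anyone connects to a live camera on the line.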

Latency from image export to inference result is typically 80 to 140 ms on a GPU-equipped edge unit, well within the cycle time window of most assembly and machined-part inspection stations. For stations with tighter cycle budgets, FPGA-based inference cards can push that under 40 ms.
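Whether that latency actually fits depends on when the PLC must commit its reject decision, not just on the nominal cycle time. A quick budget check, sketched below under stated assumptions: the 10 ms PLC I/O allowance, the 20 percent jitter margin, and the example deadlines are hypothetical placeholders to replace with values measured at your station. The 140 ms and 40 ms figures are the GPU upper bound and FPGA target quoted above.

```python
def ai_path_fits(deadline_ms: float, ai_path_ms: float, plc_io_ms: float = 10.0, margin: float = 0.2) -> bool:
    """True if worst-case AI latency plus PLC I/O, padded by a safety margin, meets the deadline.

    deadline_ms: time from image acquisition until the PLC must commit the reject decision
    ai_path_ms:  worst-case image export + inference time measured at the station
    plc_io_ms:   hypothetical allowance for returning the result over discrete I/O or Modbus TCP
    margin:      hypothetical 20% headroom for jitter
    """
    return (ai_path_ms + plc_io_ms) * (1.0 + margin) <= deadline_ms


if __name__ == "__main__":
    for path in (140.0, 40.0):  # GPU upper bound and FPGA target from the text
        for deadline in (150.0, 250.0):  # hypothetical station deadlines
            print(f"AI path {path:.0f} ms, deadline {deadline:.0f} ms -> fits: {ai_path_fits(deadline, path)}")
```

With these assumptions, the 140 ms GPU path needs about 180 ms of decision window, while the 40 ms FPGA path fits inside 60 ms.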

One practical note: In-Sight cameras running older firmware (pre-5.7) have image export rate limitations that can create queue buildup at high cycle rates. Verify firmware version before specifying the architecture. We have hit this twice on older In-Sight 5100 installations and it added a day of unplanned rework each time.
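The firmware check is easy to automate as part of the site audit. A minimal sketch, assuming the firmware version is recorded as text containing a dotted number; the 5.7 threshold comes from the note above, while the string format and the helper names `parse_firmware` and `below_minimum` are hypothetical.

```python
import re

# Threshold from the field note above: In-Sight firmware older than 5.7
# showed image-export rate limits at high cycle rates.
MIN_FIRMWARE = (5, 7)


def parse_firmware(text: str) -> tuple[int, ...]:
    """Extract the first dotted version number, e.g. 'In-Sight 5100 fw 5.6.2' -> (5, 6, 2)."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no dotted version number in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def below_minimum(text: str, minimum: tuple[int, ...] = MIN_FIRMWARE) -> bool:
    """True if the camera firmware predates the minimum and needs review before design."""
    return parse_firmware(text) < minimum
```

Requiring a dot in the match keeps a model number such as "5100" from being mistaken for the firmware version, and tuple comparison handles "4.10.3" correctly where string comparison would not.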

SDK Integration: Keyence IV2

Keyence IV2 cameras communicate via their IV Communication Library (Windows SDK) or directly through UDP/TCP Ethernet commands. For AI integration, we use the Ethernet image output. The IV2 supports image output in BMP format over a dedicated Ethernet port separate from the control network. Setup steps:

- Enable image output in the IV2 system settings (disabled by default, requires a brief offline configuration change).

- Configure the output destination IP address to point to the AI inference host.

- On the inference host, bind a listener to the specified UDP port and ingest incoming image frames.

- Return the AI result via a separate digital I/O connection or Ethernet trigger to the PLC.
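The listener step above might look like the following on the inference host. This is a sketch under a loud assumption: it treats each image as a run of in-order datagrams whose first payload starts with a standard BMP header ("BM" followed by the little-endian 32-bit file size at byte offset 2). The real IV2 image-output framing may differ, so confirm it in Keyence's documentation. `BmpAssembler` and `listen` are hypothetical names, and the port must match whatever you configure on the camera.

```python
import socket
import struct
from typing import Iterator, Optional


class BmpAssembler:
    """Rebuilds one BMP image from consecutive UDP payloads.

    ASSUMPTION: each image arrives as in-order datagrams and the first one starts
    with the standard BMP header ('BM' + little-endian uint32 file size at byte 2).
    """

    def __init__(self) -> None:
        self._buf = bytearray()
        self._expected: Optional[int] = None

    def reset(self) -> None:
        """Drop a partial frame, e.g. after a receive timeout or lost datagram."""
        self._buf.clear()
        self._expected = None

    def feed(self, chunk: bytes) -> Optional[bytes]:
        """Add one datagram; return the finished image once all bytes have arrived."""
        if self._expected is None:
            if len(chunk) < 6 or chunk[:2] != b"BM":
                return None  # not a frame start: discard and wait for the next header
            self._expected = struct.unpack_from("<I", chunk, 2)[0]
        self._buf += chunk
        if len(self._buf) < self._expected:
            return None
        frame = bytes(self._buf[: self._expected])
        self.reset()
        return frame


def listen(port: int, host: str = "0.0.0.0", timeout_s: float = 1.0) -> Iterator[bytes]:
    """Yield complete images pushed by the camera to this host's UDP port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    sock.settimeout(timeout_s)
    assembler = BmpAssembler()
    try:
        while True:
            try:
                payload, _addr = sock.recvfrom(65535)
            except socket.timeout:
                assembler.reset()  # stalled mid-frame: start clean on the next header
                continue
            frame = assembler.feed(payload)
            if frame is not None:
                yield frame
    finally:
        sock.close()
```

Keeping reassembly in its own class means it can be exercised with synthetic byte strings before the camera's image output is ever enabled.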

The one-time offline configuration step is the only modification you will make to the Keyence system. Under ten minutes. After that, the IV2 continues running its inspection job and simultaneously pushes image data to the AI host. Neither output depends on the other at runtime.

Capital Cost: Parallel Layer vs. Second Inspection Station

The question we hear from plant engineering teams is always: "Why not just add a second dedicated AI camera at the station?" Fair question. Here is the actual cost breakdown for a typical single-station upgrade.

| Option | Hardware Cost | Integration Time | Line Downtime |
|---|---|---|---|
| AI layer on existing camera | Edge compute unit: $4,000-$8,000 | 2-4 days | 4-8 hours |
| Dedicated second AI inspection station | Camera + lighting + fixture + compute: $18,000-$35,000 | 3-6 weeks | 2-5 days |

On hardware alone, the parallel AI layer costs roughly a quarter to a fifth as much as a dedicated inspection station (about $6,000 versus $26,500 at the midpoints of the ranges above), and it integrates in days rather than weeks. For a manufacturer running 4 to 6 inspection stations, that difference becomes significant against a mid-size capital budget.
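To see how the per-station gap scales across a plant, the table's ranges can simply be multiplied out. The sketch below uses only the hardware figures from the table; it deliberately leaves out integration labor and downtime, which widen the gap further.

```python
# Per-station hardware cost ranges in USD, copied from the table above.
AI_LAYER = (4_000, 8_000)
SECOND_STATION = (18_000, 35_000)


def fleet_cost(per_station: tuple[int, int], stations: int) -> tuple[int, int]:
    """Low/high hardware cost for upgrading `stations` inspection stations."""
    low, high = per_station
    return low * stations, high * stations


def midpoint(r: tuple[int, int]) -> float:
    """Midpoint of a (low, high) cost range."""
    return (r[0] + r[1]) / 2


if __name__ == "__main__":
    for n in (4, 6):
        ai_low, ai_high = fleet_cost(AI_LAYER, n)
        st_low, st_high = fleet_cost(SECOND_STATION, n)
        print(f"{n} stations: AI layer ${ai_low:,}-${ai_high:,} vs second stations ${st_low:,}-${st_high:,}")
    print(f"midpoint ratio: {midpoint(SECOND_STATION) / midpoint(AI_LAYER):.1f}x")
```

At four stations that works out to $16,000-$32,000 for the AI layer against $72,000-$140,000 for dedicated stations, and the midpoint ratio is about 4.4x.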

Commissioning Lessons

In our experience commissioning hybrid AI-plus-rule-based setups, three issues surface more than anything else.

Lighting consistency. The existing Cognex or Keyence installation was tuned to a specific lighting setup. AI defect models are also sensitive to illumination changes. If lighting at the station drifts (bulb aging, fixture angle shifts, ambient light variation by shift), your AI model will generate more false positives earlier than you expect. Build lighting verification into the weekly PM checklist from day one. Not optional.
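One simple way to make the weekly lighting check objective is to compare the mean intensity of a fixed region (a reference part or calibration target in view) against a baseline recorded at commissioning. A minimal sketch, assuming 8-bit grayscale pixel values are already extracted from that region; the 5 percent tolerance and the helper names are hypothetical starting points, not validated limits.

```python
from statistics import fmean
from typing import Sequence


def drift_pct(baseline_mean: float, pixels: Sequence[int]) -> float:
    """Percent change in mean intensity versus the commissioning baseline."""
    return 100.0 * (fmean(pixels) - baseline_mean) / baseline_mean


def lighting_ok(baseline_mean: float, pixels: Sequence[int], tolerance_pct: float = 5.0) -> bool:
    """Pass the weekly PM check if brightness stays within the (hypothetical) tolerance."""
    return abs(drift_pct(baseline_mean, pixels)) <= tolerance_pct
```

A mean-brightness check catches bulb aging and ambient shifts well, but a fixture angle change can move shadows without changing the mean much, so it complements rather than replaces a visual comparison against the reference image.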

Model revalidation on variant changeover. A defect model trained on Part A is not guaranteed to perform correctly on Part B, even if they look similar. During commissioning, we catalog every active part number at the station and train or validate a separate model for each, typically using 4 to 12 hours of training data per variant depending on defect complexity and the image volume available. That is the startup cost. The payoff is that changeover at runtime becomes a model swap, not a re-commissioning.
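The "model swap, not a re-commissioning" idea reduces to a lookup that refuses to run unvalidated parts. A sketch, assuming the inference host is told the active part number at changeover; `ModelRegistry`, the part numbers, and the model paths are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ModelRegistry:
    """Maps each active part number to its validated defect model (paths hypothetical)."""
    validated: Dict[str, str] = field(default_factory=dict)
    active_part: Optional[str] = None

    def register(self, part_number: str, model_path: str) -> None:
        """Record a model that passed validation for this part number."""
        self.validated[part_number] = model_path

    def changeover(self, part_number: str) -> str:
        """Swap the active model at changeover; refuse parts that were never validated."""
        if part_number not in self.validated:
            raise KeyError(f"no validated model for part {part_number}")
        self.active_part = part_number
        return self.validated[part_number]
```

Failing loudly on an unknown part number is the design choice here: silently reusing the previous part's model is exactly the Part A / Part B mismatch described above.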

PLC output logic for dual-system voting. The existing Cognex or Keyence pass/fail output goes to one PLC input. The AI output goes to a second. How the PLC aggregates those two votes must be specified before integration, not after. The most common configuration is OR logic on fail: reject if either system flags the part. Some lines use AND logic for cosmetic-only defect categories to reduce false reject rate during model burn-in. Either approach is valid. Decide before you wire it up.
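Writing the voting rule down as a truth table before wiring helps settle the decision. The real logic lives in the PLC program; the Python below is only a specification sketch. The treatment of a missing AI vote (rule-based verdict decides alone) is our assumption, chosen to match the fallback behavior described in the architecture section. `Policy` and `should_reject` are hypothetical names.

```python
from enum import Enum
from typing import Optional


class Policy(Enum):
    OR_ON_FAIL = "or"    # reject if either system flags the part
    AND_ON_FAIL = "and"  # reject only if both flag it (e.g. cosmetic categories during burn-in)


def should_reject(rule_fail: bool, ai_fail: Optional[bool], policy: Policy) -> bool:
    """Combine the two votes the way the PLC would for one defect category."""
    if ai_fail is None:  # assumption: AI lane offline or late, so the rule-based vote decides alone
        return rule_fail
    if policy is Policy.OR_ON_FAIL:
        return rule_fail or ai_fail
    return rule_fail and ai_fail


if __name__ == "__main__":
    for policy in Policy:
        for rule_fail in (False, True):
            for ai_fail in (False, True, None):
                print(policy.name, rule_fail, ai_fail, "->", should_reject(rule_fail, ai_fail, policy))
```

Printing all six combinations per policy gives a table that the controls engineer and the quality engineer can sign off on before the ladder logic is written.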

Practical note: we always recommend running the AI layer in monitor mode for the first two weeks after commissioning. The PLC reads the AI output but does not act on it. You accumulate a production sample, compare AI calls against human inspection records, and tune the confidence threshold before the AI output controls any physical reject path. On past commissioning projects, those two weeks of monitor data have caught model-tuning issues that would have driven the false-reject rate up sharply if we had gone live immediately.
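The threshold-tuning step at the end of monitor mode can be framed as: among candidate thresholds, pick the lowest one whose false-reject rate on human-confirmed good parts stays under a cap. A sketch, assuming each monitor-mode record pairs the AI's defect confidence with the human disposition for the same part; `MonitorRecord`, the candidate list, and the cap are hypothetical and site-specific.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class MonitorRecord:
    ai_score: float     # AI defect confidence logged in monitor mode (0 to 1)
    human_defect: bool  # disposition from the matching human inspection record


def rates(records: Sequence[MonitorRecord], threshold: float) -> Tuple[float, float]:
    """(false-reject rate on good parts, escape rate on defective parts) at a threshold."""
    good = [r for r in records if not r.human_defect]
    bad = [r for r in records if r.human_defect]
    false_rejects = sum(r.ai_score >= threshold for r in good)
    escapes = sum(r.ai_score < threshold for r in bad)
    return (
        false_rejects / len(good) if good else 0.0,
        escapes / len(bad) if bad else 0.0,
    )


def pick_threshold(
    records: Sequence[MonitorRecord], candidates: Sequence[float], max_false_reject_rate: float
) -> Optional[float]:
    """Lowest candidate threshold whose false-reject rate is within the cap.

    Raising the threshold never increases false rejects and never decreases escapes,
    so the lowest threshold that meets the cap yields the fewest escapes under it.
    """
    for threshold in sorted(candidates):
        false_reject_rate, _ = rates(records, threshold)
        if false_reject_rate <= max_false_reject_rate:
            return threshold
    return None
```

Returning `None` when no candidate meets the cap is deliberate: it signals that the model, not the threshold, needs work before the AI output is allowed to drive the reject path.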

What This Architecture Does Not Cover

To be clear about scope: the parallel AI layer handles surface defect detection on the image the camera already captures. It does not give your Cognex or Keyence camera new viewing angles, change the field of view, or fix lighting problems that compromise the existing rule-based inspection. If the current installation has a coverage gap on a particular part surface, address that separately before adding the AI layer. The AI layer amplifies what the camera already sees. It does not compensate for fundamental optical setup deficiencies.

It also does not replace periodic revalidation of the Cognex or Keyence program itself. Those systems need their own maintenance cycle tied to part drawing revisions and tolerance changes. Keep that separate from the AI layer's validation cadence.

Getting Started

The practical starting point is an audit of your existing installation: camera model, firmware version, current inspection job parameters, cycle time, and PLC I/O map. That audit typically takes one day and produces the integration specification. From specification to first AI inference running on the line is typically 3 to 5 days of integration work, plus the model training period for your active part numbers.

For most mid-size manufacturers, the fastest path to AI defect detection is not a new system. It is a better use of the one already running.

Want to evaluate your current Cognex or Keyence installation for an AI upgrade?

Contact our applications engineering team to schedule a station audit. We will assess compatibility, identify the integration path, and give you a realistic timeline and cost estimate.