Running AI Inference on National Instruments Image Acquisition Hardware

NI FlexRIO and NI Vision hardware can serve as the image acquisition layer for AI defect detection without replacing existing NI infrastructure.

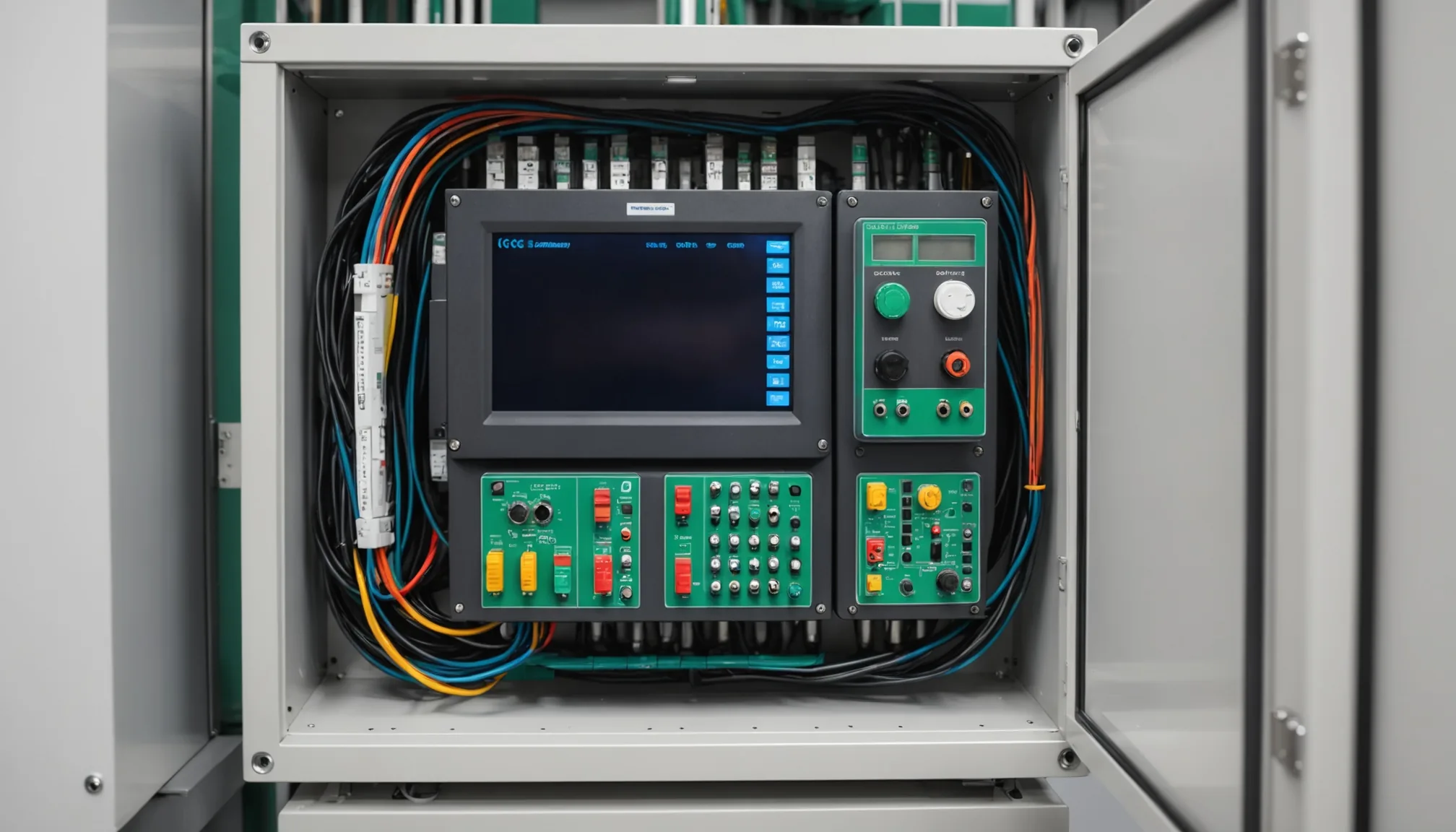

NI hardware is everywhere in precision manufacturing. The FlexRIO chassis, the NI Vision Acquisition Software stack, GigE Vision frame grabbers wired into machine frames that cost more than most people's houses. Plants running discrete electronics inspection, defense subassembly verification, precision fabrication quality checks. They've built these systems over years, and the NI ecosystem is deeply integrated into their test and measurement infrastructure.

When those same plants want to add AI defect detection, the first instinct is often to rip and replace. That instinct is wrong. In our experience, a better approach is to treat the existing NI acquisition layer as exactly what it is: a high-quality, calibrated image source. The AI inference layer runs on top of it. You don't have to touch the hardware.

Why NI Hardware Dominates These Environments

Consumer-grade GigE cameras max out around 60-90 fps at 5MP. That's fine for some applications. Not fine for high-speed web inspection, multi-spectral verification of soldering quality, or line-scan acquisition on a moving conveyor belt running at 2 meters per second.

NI FlexRIO systems with Camera Link or CoaXPress interfaces can sustain acquisition at 200 fps and above. The FPGA layer handles real-time preprocessing: flat-field correction, lens distortion compensation, trigger synchronization with PLC signals. That preprocessing happens in hardware before a single frame hits memory. Industrial lines can't afford the jitter you get from software-based preprocessing on a general-purpose CPU.

Multispectral acquisition is the other capability that matters. A standard RGB camera won't catch certain solder void patterns or subsurface micro-fractures. NI Vision hardware paired with structured light or NIR illumination gives you image channels that carry information a consumer sensor can't see. We've seen this configuration used in medical device subassembly, aerospace connector inspection, and PCB population verification. The acquired image data is qualitatively different. Richer. That actually makes AI training easier, not harder.

Where the Inference Layer Connects

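NI Vision Acquisition Software exposes acquired frames through the NI-IMAQ and NI-IMAQdx APIs. Those buffers can be read from Python through bindings to the NI Vision and IMAQdx driver libraries, or through the NI-IMAQdx .NET wrapper if you're on a C# inference stack. Note that the nidaqmx Python package covers NI-DAQmx devices such as trigger and I/O lines, not image buffers, so it complements the vision bindings rather than replacing them. You're not doing anything exotic. You're just reading frame buffers that NI already makes available.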

The architecture splits cleanly at the buffer boundary. NI handles acquisition, frame timing, FPGA preprocessing, and trigger synchronization. Once a frame lands in the NI ring buffer, your inference process reads it, runs the model, and returns a pass/fail decision before the next trigger fires. At 200fps, you have 5ms per frame. That's tight. Modern GPU inference on a 640x640 crop of a 4K frame runs in 2-3ms on a mid-range industrial GPU. Fits.
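The timing argument above can be checked with a few lines of arithmetic. This is a minimal sketch: the function names are hypothetical, and the 1 ms overhead for buffer copy and host-to-GPU transfer is an assumed placeholder, not a measured figure.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given trigger rate."""
    return 1000.0 / fps


def headroom_ms(fps: float, inference_ms: float, overhead_ms: float = 0.0) -> float:
    """Budget left after inference plus copy/transfer overhead.

    A negative result means the pipeline overruns the frame period.
    """
    return frame_budget_ms(fps) - (inference_ms + overhead_ms)


if __name__ == "__main__":
    # 2-3 ms inference is the range quoted in the text;
    # overhead_ms=1.0 is an assumption for illustration only.
    for inference_ms in (2.0, 3.0):
        left = headroom_ms(200, inference_ms, overhead_ms=1.0)
        print(f"inference {inference_ms} ms -> headroom {left:.2f} ms")
```

Measuring the real overhead on the target machine is the part that matters. The inference time alone fits in 5 ms, but the margin shrinks quickly once copies and transfers are counted.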

The key design choice is buffer management. NI's ring buffer is finite. If your inference process can't drain frames fast enough, you lose frames. In practice, we've found that running a dedicated inference thread with a separate frame queue, pulling from NI's buffer on each acquisition interrupt, keeps pace at frame rates up to 180fps without drops. Above that you either need a faster GPU or you accept that some frames get skipped, which is usually fine for statistical sampling.

Practical note: Don't try to run inference inside the NI acquisition callback. That callback runs in the NI driver thread with strict timing constraints. Copy the frame out, signal a condition variable, let the inference thread do its work. Blocking in that callback causes acquisition faults.
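The copy-and-signal pattern can be sketched with the Python standard library alone. Everything here is hypothetical scaffolding: `FrameHandoff`, `run_inference`, and the drop-oldest policy are illustrative choices, and the loop in `__main__` stands in for whatever callback mechanism your NI bindings actually expose.

```python
import threading
from collections import deque


class FrameHandoff:
    """Bounded hand-off between an acquisition callback and an inference thread."""

    def __init__(self, max_frames=8):
        self._frames = deque()
        self._max_frames = max_frames
        self._cond = threading.Condition()
        self._closed = False
        self.dropped = 0

    def push(self, frame):
        """Call from the acquisition callback: copy, enqueue, notify, return."""
        copy = bytes(frame)  # copies a bytearray/memoryview out of the reused buffer
        with self._cond:
            if len(self._frames) >= self._max_frames:
                self._frames.popleft()  # drop the oldest frame instead of blocking
                self.dropped += 1
            self._frames.append(copy)
            self._cond.notify()

    def pop(self):
        """Call from the inference thread: wait for a frame, or None after close."""
        with self._cond:
            while not self._frames and not self._closed:
                self._cond.wait()
            return self._frames.popleft() if self._frames else None

    def close(self):
        """Wake the inference thread so it can drain the queue and exit."""
        with self._cond:
            self._closed = True
            self._cond.notify_all()


def run_inference(frame):
    """Placeholder for the model: passes frames whose mean byte value is under 128."""
    return sum(frame) / max(len(frame), 1) < 128


def inference_worker(handoff, results):
    """Consume frames until the hand-off is closed and empty."""
    while True:
        frame = handoff.pop()
        if frame is None:
            break
        results.append(run_inference(frame))


if __name__ == "__main__":
    handoff, results = FrameHandoff(max_frames=4), []
    worker = threading.Thread(target=inference_worker, args=(handoff, results))
    worker.start()
    for i in range(10):
        handoff.push(bytearray([i * 20] * 16))  # stand-in for the driver callback
    handoff.close()
    worker.join()
    print(f"processed={len(results)} dropped={handoff.dropped}")
```

The callback side never waits on the model: it holds the lock only long enough to enqueue, and when the queue is full it discards the oldest frame and counts the drop. That counter is worth exporting to your monitoring, since it tells you directly when inference has stopped keeping pace.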

GPU Offload vs. FPGA Processing: What Goes Where

This is where vision system engineers and ML engineers sometimes talk past each other. FPGA processing on NI FlexRIO is excellent for deterministic, latency-critical operations: threshold triggers, histogram generation, blob detection for presence/absence. Sub-millisecond, guaranteed. But FPGA logic is not the right place for a convolutional neural network with 25 million parameters.

GPU inference is the right tool for AI defect detection. The parallel arithmetic units in a modern GPU are purpose-built for the matrix multiply operations CNNs run on. An NVIDIA RTX 3000-series or an A2 in the industrial form factor delivers 10-30x throughput compared to running the same model on a server CPU. A10 cards are common in mid-size manufacturing deployments because they offer a good balance of memory capacity (24GB) and industrial supply chain availability.

The FPGA still earns its keep. Use it for pre-trigger qualification. A simple edge-detection pass on the FPGA can tell you whether a part is present in frame before sending that frame to GPU inference. If 40% of your frames are empty conveyor belt, you've just cut your inference load by 40%. Keeps the GPU from becoming the bottleneck.
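On a real system this gate would be implemented in FPGA logic, not in Python. The sketch below only illustrates the decision rule and its effect on GPU load; the function names, the single-row input, and the threshold of 200 are assumptions chosen for the example.

```python
def edge_energy(row):
    """Sum of absolute differences between neighbouring pixels in one scan row."""
    return sum(abs(b - a) for a, b in zip(row, row[1:]))


def part_present(row, threshold=200):
    """True when the row has enough edge content to suggest a part is in frame."""
    return edge_energy(row) >= threshold


def gated_inference_rate(fps, empty_fraction):
    """Frames per second that still reach the GPU once empty frames are gated out."""
    return fps * (1.0 - empty_fraction)


if __name__ == "__main__":
    empty_belt = [30] * 64                       # uniform background, no edges
    with_part = [30] * 20 + [220] * 24 + [30] * 20  # bright part on dark belt
    print(part_present(empty_belt), part_present(with_part))
    print(gated_inference_rate(200, 0.40))
```

A uniform belt produces no edge energy, while a part produces two strong transitions, so even a crude threshold separates the two cases. With 40% of frames empty, a 200 fps line sends only 120 frames per second to the GPU.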

What the Integration Looks Like in Practice

The integration experience is different depending on who's doing it.

For a quality engineer running the system day-to-day, none of this is visible. They see a pass/fail indicator on the HMI, defect images when something fails, and a log they can query for shift reports. The AI layer is invisible to them. That's by design. We've seen what happens when you surface too many model internals to quality teams: they stop trusting it and start second-guessing every flag. Keep the interface simple.

For a vision system engineer doing the integration, the main work is the interface layer between NI's buffer API and the inference service. On a recent deployment for a mid-size electronics manufacturer in the Midwest, the integration took about two weeks. Most of that time wasn't writing code. It was characterizing the illumination geometry, getting consistent frame normalization from the NI preprocessing pipeline, and building the calibration dataset the model needed for fine-tuning. The NI hardware itself was straightforward to interface. The IMAQdx Python bindings work.

Model retraining is the ongoing operational commitment. NI's acquisition setup means the training images come from the same sensor, same optics, same illumination as the production deployment. That keeps domain shift between training data and production data to a minimum. It's a significant advantage over factories that try to build training datasets from separate lab cameras. Consistent acquisition conditions reduce the amount of labeled data needed to get a model performing reliably.

Performance Considerations at Scale

Running inference in parallel with acquisition puts load on the PCIe bus. NI frame grabbers typically use PCIe x4 or x8 slots. High-throughput AI inference requires PCIe bandwidth for tensor transfers between host memory and GPU. On systems with a single PCIe root complex, you can run into bandwidth contention. Not a problem at 60fps. At 200fps with 12MP frames, it can be. Plan your PCIe topology before you commit to a hardware spec. A dual-root-complex server chassis solves this cleanly, if that's within budget.
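A rough estimate of the acquisition stream against nominal link bandwidth shows why the frame rate matters. This sketch assumes 16-bit pixels and PCIe Gen3 links (about 0.985 GB/s per lane after encoding overhead); neither is specified for this scenario in the text, and real DMA throughput will be lower than the nominal figure.

```python
# Nominal usable bandwidth per PCIe Gen3 lane (8 GT/s, 128b/130b encoding), in GB/s.
# Assumption for illustration; substitute the generation your chassis provides.
PCIE_GEN3_GBYTES_PER_LANE = 0.985


def stream_gbytes_per_s(fps, megapixels, bytes_per_pixel):
    """Raw image data rate in GB/s for a given frame rate and frame size."""
    return fps * megapixels * 1e6 * bytes_per_pixel / 1e9


def link_utilization(stream_gbytes, lanes, per_lane=PCIE_GEN3_GBYTES_PER_LANE):
    """Fraction of nominal link bandwidth consumed by the acquisition stream."""
    return stream_gbytes / (lanes * per_lane)


if __name__ == "__main__":
    for fps in (60, 200):
        rate = stream_gbytes_per_s(fps, megapixels=12, bytes_per_pixel=2)
        for lanes in (4, 8):
            share = link_utilization(rate, lanes)
            print(f"{fps} fps, x{lanes}: {rate:.2f} GB/s, {share:.0%} of nominal link")
```

Under these assumptions, 12MP 16-bit frames at 200 fps come to 4.8 GB/s, which already exceeds a Gen3 x4 link before any GPU tensor traffic is counted. The same frames at 60 fps need 1.44 GB/s and leave plenty of room.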

Memory footprint matters too. NI ring buffers allocate from host memory. A 20-frame ring buffer of 12MP 16-bit frames comes to roughly 480MB (24MB per frame) just for acquisition. Your inference process needs additional memory for batch queuing. On a system with 64GB RAM, this isn't a concern. On a smaller industrial PC with 16GB, it requires careful allocation planning. Size it out before procurement, not after.
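The ring buffer figure is easy to reproduce for other configurations. A small sketch, with a hypothetical helper name, that rounds each pixel up to whole bytes:

```python
def ring_buffer_bytes(frames, megapixels, bits_per_pixel):
    """Host memory needed for an acquisition ring buffer, in bytes."""
    bytes_per_pixel = (bits_per_pixel + 7) // 8  # round up to whole bytes
    return frames * int(megapixels * 1_000_000) * bytes_per_pixel


if __name__ == "__main__":
    size = ring_buffer_bytes(frames=20, megapixels=12, bits_per_pixel=16)
    print(f"{size / 1e6:.0f} MB")
```

Packed 10-bit or 12-bit formats would need less than this estimate, since the sketch assumes each pixel occupies a whole number of bytes.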

Power and thermal are the unglamorous part of industrial AI. A GPU under inference load in a sealed industrial enclosure generates heat. Fast. Budget for proper thermal management. An A2 at full load draws up to 60W. In a standard DIN-rail cabinet with passive airflow, that'll cause thermal throttling inside 30 minutes. Use active cooling or a proper industrial GPU chassis.

What This Means for Your NI Investment

The case for keeping NI hardware isn't just financial, though the financial case is real. A FlexRIO chassis plus camera hardware represents $40,000 to $120,000 in acquisition infrastructure depending on configuration. Replacing it to add AI inspection doesn't make sense when the integration path is this clean.

The deeper reason is capability. NI Vision hardware was designed for demanding industrial acquisition. High frame rates. Deterministic timing. FPGA preprocessing. Multispectral support. Those capabilities are genuinely difficult to replicate with consumer-grade or mid-range industrial alternatives. For the inspection tasks where those capabilities matter, keeping NI and adding AI inference on top is the technically superior choice, not just the economically convenient one.

We're working with manufacturers who have NI infrastructure already in place and want to layer AI inspection on top without a facility-wide hardware refresh. That integration is achievable. The work is mostly in the software interface layer, the model training setup, and the operational handoff. The NI hardware itself doesn't need to change.

If you're evaluating whether your existing NI setup can support AI inference, the short answer is: almost certainly yes. The longer answer involves your specific frame rate, image dimensions, and latency requirements. That's a conversation worth having before you spec new hardware.

Evaluating how AI inspection fits with your existing vision infrastructure? Talk to our engineering team about your NI hardware configuration.